diff --git a/.gitignore b/.gitignore

index 987f0547..7a9c92b8 100644

--- a/.gitignore

+++ b/.gitignore

@@ -145,3 +145,4 @@ cradle*

debug*

private*

crazy_functions/test_project/pdf_and_word

+crazy_functions/test_samples

diff --git a/README.md b/README.md

index 9f0c4893..67aed4c3 100644

--- a/README.md

+++ b/README.md

@@ -32,20 +32,20 @@ If you like this project, please give it a Star. If you've come up with more use

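One-click Chinese-English translation | One-click Chinese-English translation

One-click code explanation | Displays and explains code correctly

[Custom shortcut keys](https://www.bilibili.com/video/BV14s4y1E7jN) | Supports custom shortcut keys

-[Proxy server configuration](https://www.bilibili.com/video/BV1rc411W7Dr) | Supports configuring a proxy server

-Modular design | Supports custom advanced function plugins and [function plugins]; plugins support [hot reloading](https://github.com/binary-husky/chatgpt_academic/wiki/%E5%87%BD%E6%95%B0%E6%8F%92%E4%BB%B6%E6%8C%87%E5%8D%97)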

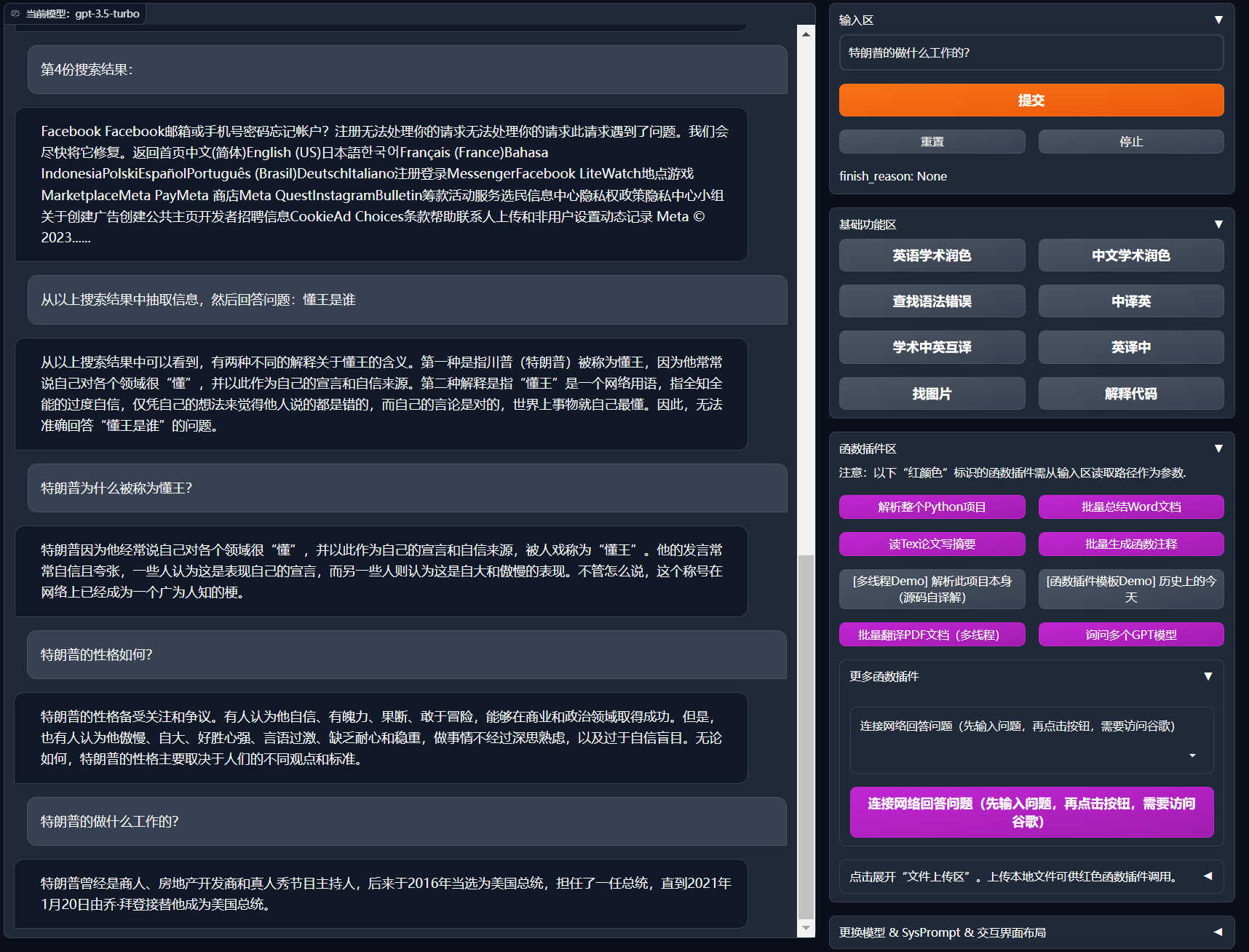

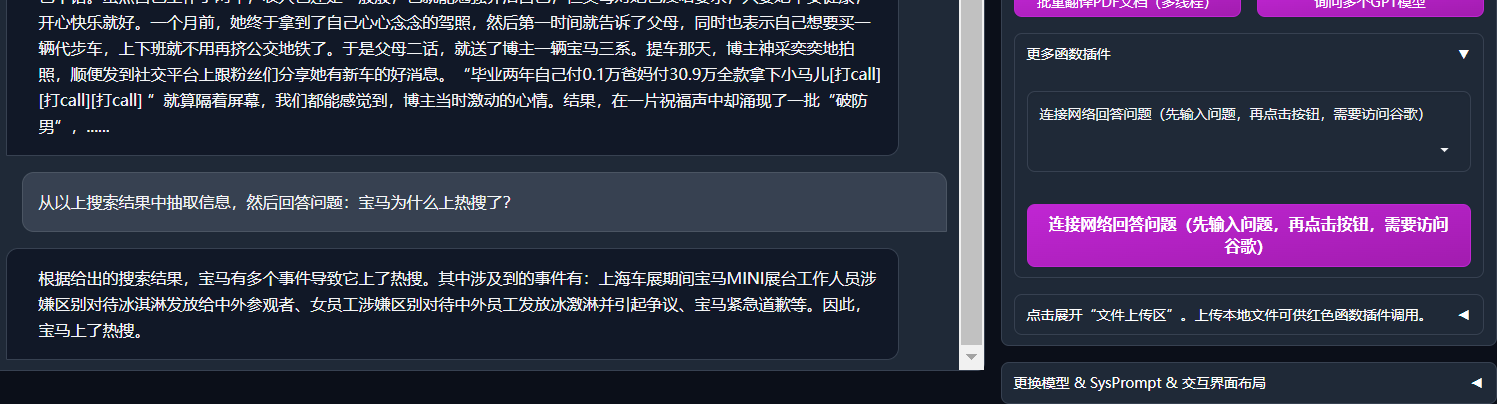

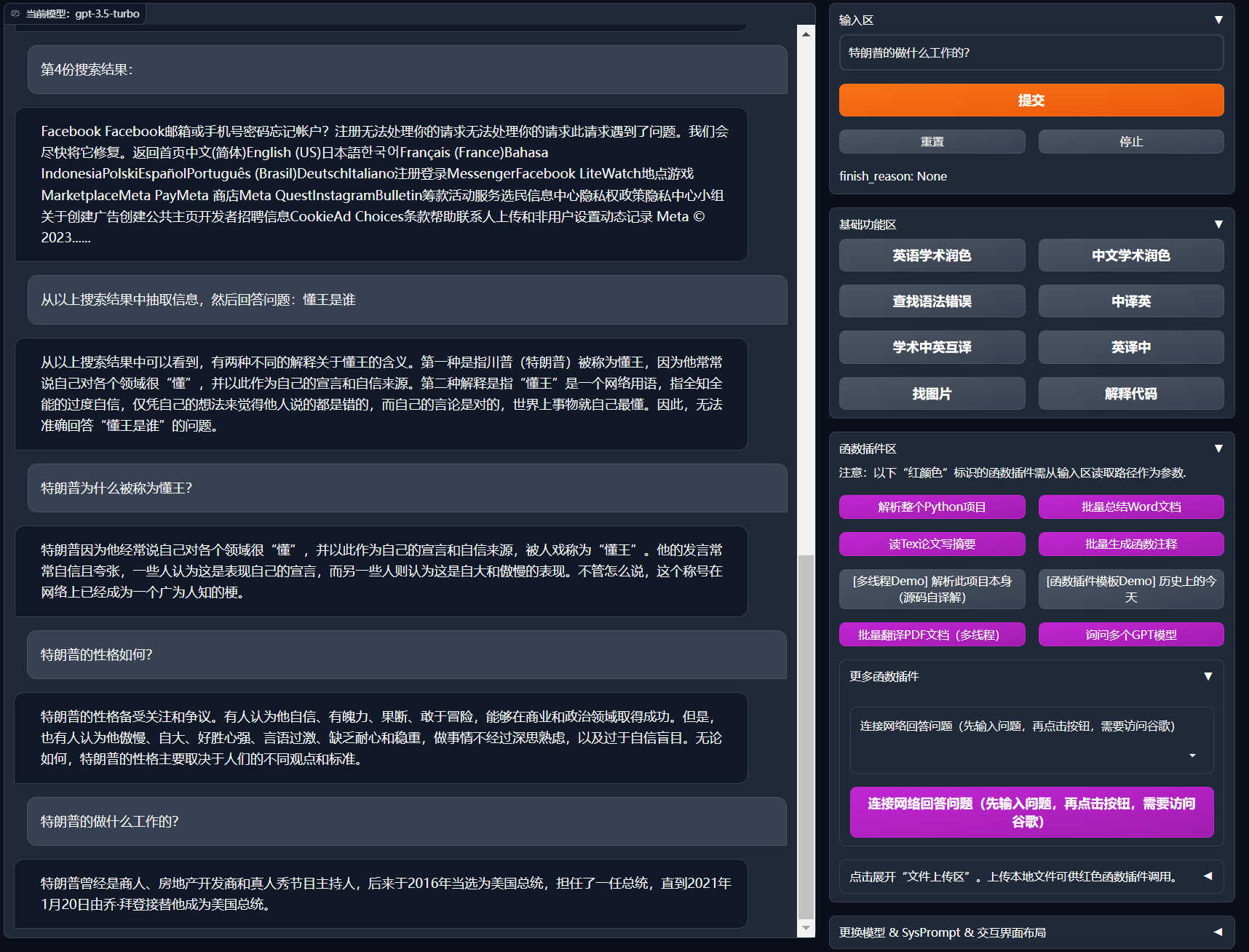

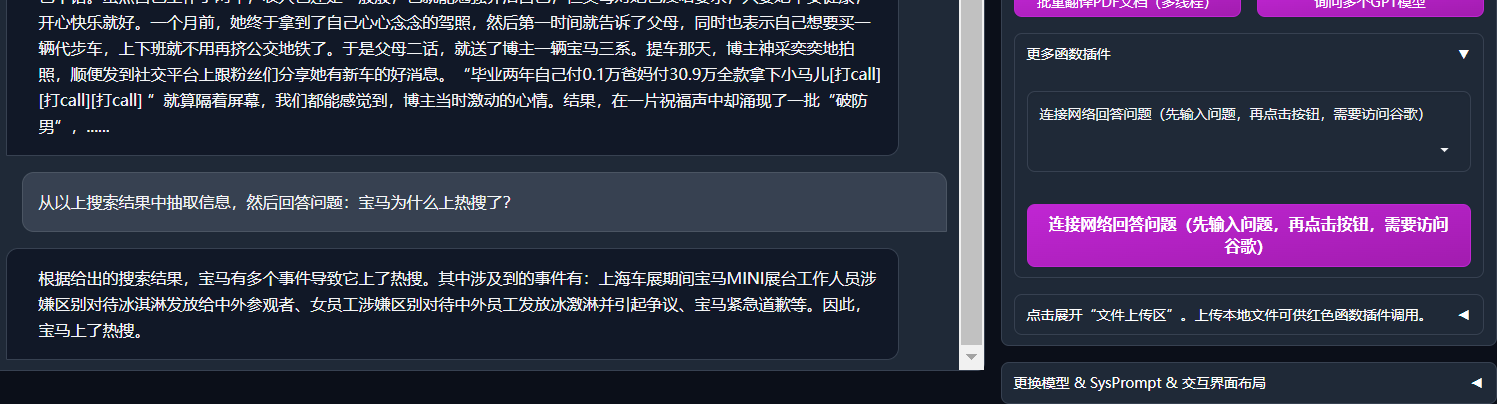

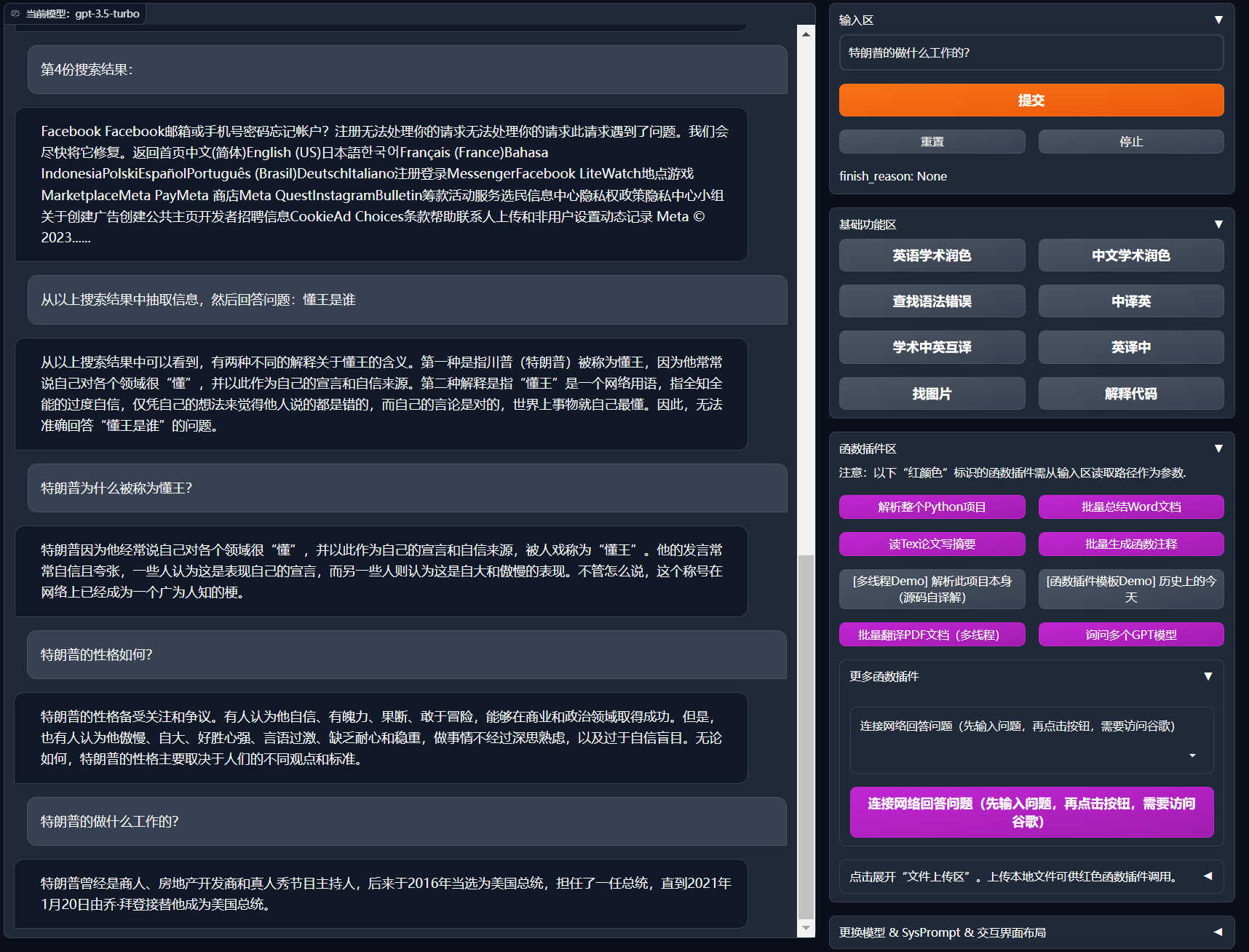

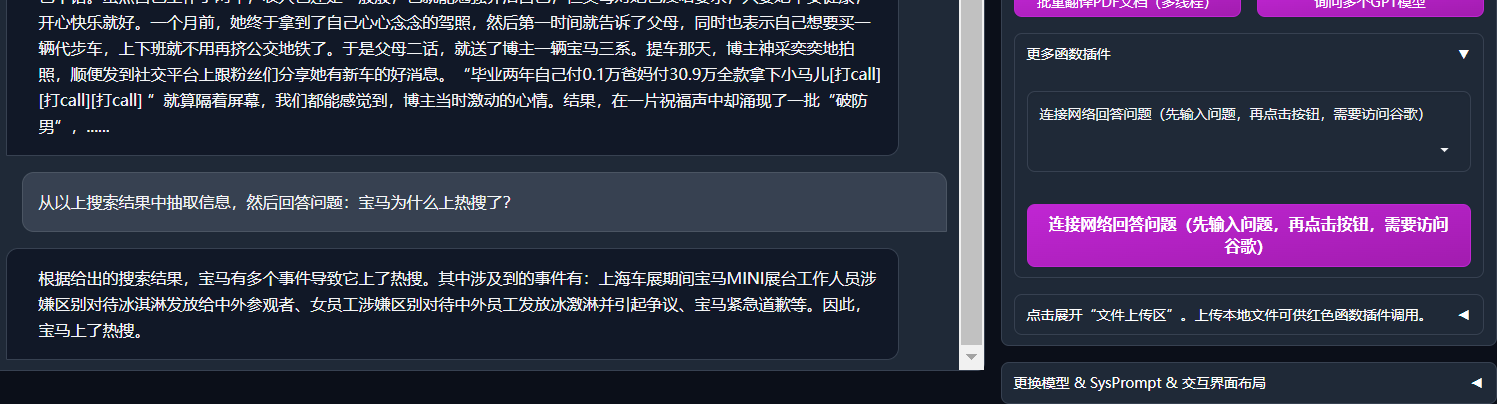

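+[Proxy server configuration](https://www.bilibili.com/video/BV1rc411W7Dr) | Supports connecting to OpenAI/Google etc. through a proxy, instantly unlocking ChatGPT's internet [real-time information aggregation](https://www.bilibili.com/video/BV1om4y127ck/) capability

+Modular design | Supports powerful custom [function plugins](https://github.com/binary-husky/chatgpt_academic/tree/master/crazy_functions); plugins support [hot reloading](https://github.com/binary-husky/chatgpt_academic/wiki/%E5%87%BD%E6%95%B0%E6%8F%92%E4%BB%B6%E6%8C%87%E5%8D%97)

[Self-analysis of this program](https://www.bilibili.com/video/BV1cj411A7VW) | [Function plugin] [One-click understanding](https://github.com/binary-husky/chatgpt_academic/wiki/chatgpt-academic%E9%A1%B9%E7%9B%AE%E8%87%AA%E8%AF%91%E8%A7%A3%E6%8A%A5%E5%91%8A) of this project's own source code

[Program analysis](https://www.bilibili.com/video/BV1cj411A7VW) | [Function plugin] One-click analysis of other Python/C/C++/Java/Lua/... project trees

Paper reading | [Function plugin] One-click interpretation of a full LaTeX paper, with an abstract generated

-Full-text LaTeX translation and polishing | [Function plugin] One-click translation or polishing of LaTeX papers

+Full-text LaTeX [translation](https://www.bilibili.com/video/BV1nk4y1Y7Js/) and [polishing](https://www.bilibili.com/video/BV1FT411H7c5/) | [Function plugin] One-click translation or polishing of LaTeX papers

Batch comment generation | [Function plugin] One-click batch generation of function comments

Chat analysis report generation | [Function plugin] Automatically generates a summary report after running

-Markdown Chinese-English translation | [Function plugin] Have you seen the [README](https://github.com/binary-husky/chatgpt_academic/blob/master/docs/README_EN.md) in 5 languages above?

+Markdown [Chinese-English translation](https://www.bilibili.com/video/BV1yo4y157jV/) | [Function plugin] Have you seen the [README](https://github.com/binary-husky/chatgpt_academic/blob/master/docs/README_EN.md) in 5 languages above?

[arxiv assistant](https://www.bilibili.com/video/BV1LM4y1279X) | [Function plugin] Enter an arxiv article URL to translate the abstract and download the PDF in one click

[Full-text PDF paper translation](https://www.bilibili.com/video/BV1KT411x7Wn) | [Function plugin] Extracts the title & abstract of a PDF paper and translates the full text (multi-threaded)

-[Google Scholar integration assistant](https://www.bilibili.com/video/BV19L411U7ia) | [Function plugin] Given any Google Scholar search page URL, let GPT pick out interesting articles for you

-Formula/image/table display | Shows both the TeX form and the rendered form of formulas; supports formula and code highlighting

-Multi-threaded function plugin support | Supports calling ChatGPT from multiple threads to process large volumes of text or code in one click

+[Google Scholar integration assistant](https://www.bilibili.com/video/BV19L411U7ia) | [Function plugin] Given any Google Scholar search page URL, let GPT [write the related works](https://www.bilibili.com/video/BV1GP411U7Az/) for you

+Formula/image/table display | Shows both the [TeX form and the rendered form](https://user-images.githubusercontent.com/96192199/230598842-1d7fcddd-815d-40ee-af60-baf488a199df.png) of formulas; supports formula and code highlighting

+Multi-threaded function plugin support | Supports calling ChatGPT from multiple threads to process [large volumes of text](https://www.bilibili.com/video/BV1FT411H7c5/) or code in one click

Dark gradio [theme](https://github.com/binary-husky/chatgpt_academic/issues/173) | Append ```/?__dark-theme=true``` to the browser URL to switch to the dark theme

[Multiple LLM models](https://www.bilibili.com/video/BV1wT411p7yf) support, [API2D](https://api2d.com/) interface support | Being served by GPT3.5, GPT4 and [Tsinghua ChatGLM](https://github.com/THUDM/ChatGLM-6B) at the same time must feel great, right?

HuggingFace [online demo](https://huggingface.co/spaces/qingxu98/gpt-academic) without a VPN | After logging in to HuggingFace, duplicate [this Space](https://huggingface.co/spaces/qingxu98/gpt-academic)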

@@ -183,6 +183,8 @@ docker run --rm -it --net=host --gpus=all gpt-academic bash

2. Using WSL2 (Windows Subsystem for Linux)

See [deployment wiki-2](https://github.com/binary-husky/chatgpt_academic/wiki/%E4%BD%BF%E7%94%A8WSL2%EF%BC%88Windows-Subsystem-for-Linux-%E5%AD%90%E7%B3%BB%E7%BB%9F%EF%BC%89%E9%83%A8%E7%BD%B2)

+3. How to run under a sub-path (e.g. `http://localhost/subpath`)

+See the [FastAPI instructions](docs/WithFastapi.md)

## Installation - proxy configuration

1. Standard method

@@ -278,12 +280,15 @@ docker run --rm -it --net=host --gpus=all gpt-academic bash

+

+

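## Todo and version roadmap:

-- version 3.2+ (todo): function plugins support more parameter interfaces

+- version 3.3+ (todo): NewBing support

+- version 3.2: function plugins support more parameter interfaces (saving conversations, interpreting code in any language, querying any combination of LLMs at once)

- version 3.1: query multiple GPT models at once! api2d support, load balancing across multiple API keys

- version 3.0: support for chatglm and other small LLMs

- version 2.6: restructured the plugin system, improved interactivity, added more plugins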

diff --git a/config.py b/config.py

index 6da37810..daad6eae 100644

--- a/config.py

+++ b/config.py

@@ -57,6 +57,9 @@ CONCURRENT_COUNT = 100

# [("username", "password"), ("username2", "password2"), ...]

AUTHENTICATION = []

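-# URL redirection, used to replace API_URL (do NOT modify under normal circumstances!!)

+# URL redirection, used to replace API_URL (do NOT modify under normal circumstances!!)

# Format: {"https://api.openai.com/v1/chat/completions": "redirected URL"}

API_URL_REDIRECT = {}

+

+# To run under a sub-path (do NOT modify under normal circumstances!!) (only takes effect together with the corresponding change in main.py!)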

+CUSTOM_PATH = "/"

diff --git a/crazy_functional.py b/crazy_functional.py

index 8e3ab6a8..8b8a3941 100644

--- a/crazy_functional.py

+++ b/crazy_functional.py

@@ -19,12 +19,25 @@ def get_crazy_functions():

from crazy_functions.解析项目源代码 import 解析一个Lua项目

from crazy_functions.解析项目源代码 import 解析一个CSharp项目

from crazy_functions.总结word文档 import 总结word文档

+ from crazy_functions.解析JupyterNotebook import 解析ipynb文件

+ from crazy_functions.对话历史存档 import 对话历史存档

function_plugins = {

"解析整个Python项目": {

"Color": "stop", # button color

"Function": HotReload(解析一个Python项目)

},

+ "保存当前的对话": {

+ "AsButton":False,

+ "Function": HotReload(对话历史存档)

+ },

+ "[测试功能] 解析Jupyter Notebook文件": {

+ "Color": "stop",

+ "AsButton":False,

+ "Function": HotReload(解析ipynb文件),

+ "AdvancedArgs": True, # when invoked, show the advanced-argument input area (default False)

+ "ArgsReminder": "若输入0,则不解析notebook中的Markdown块", # hint displayed in the advanced-argument input area

+ },

"批量总结Word文档": {

"Color": "stop",

"Function": HotReload(总结word文档)

@@ -168,7 +181,7 @@ def get_crazy_functions():

"AsButton": False, # add to the dropdown menu

"Function": HotReload(Markdown英译中)

},

-

+

})

###################### Plugin group 3 ###########################

@@ -181,7 +194,7 @@ def get_crazy_functions():

"Function": HotReload(下载arxiv论文并翻译摘要)

}

})

-

+

from crazy_functions.联网的ChatGPT import 连接网络回答问题

function_plugins.update({

"连接网络回答问题(先输入问题,再点击按钮,需要访问谷歌)": {

@@ -191,5 +204,25 @@ def get_crazy_functions():

}

})

+ from crazy_functions.解析项目源代码 import 解析任意code项目

+ function_plugins.update({

+ "解析项目源代码(手动指定和筛选源代码文件类型)": {

+ "Color": "stop",

+ "AsButton": False,

+ "AdvancedArgs": True, # when invoked, show the advanced-argument input area (default False)

+ "ArgsReminder": "输入时用逗号隔开, *代表通配符, 加了^代表不匹配; 不输入代表全部匹配。例如: \"*.c, ^*.cpp, config.toml, ^*.toml\"", # hint displayed in the advanced-argument input area

+ "Function": HotReload(解析任意code项目)

+ },

+ })

+ from crazy_functions.询问多个大语言模型 import 同时问询_指定模型

+ function_plugins.update({

+ "询问多个GPT模型(手动指定询问哪些模型)": {

+ "Color": "stop",

+ "AsButton": False,

+ "AdvancedArgs": True, # when invoked, show the advanced-argument input area (default False)

+ "ArgsReminder": "支持任意数量的llm接口,用&符号分隔。例如chatglm&gpt-3.5-turbo&api2d-gpt-4", # hint displayed in the advanced-argument input area

+ "Function": HotReload(同时问询_指定模型)

+ },

+ })

###################### Plugin group n ###########################

return function_plugins
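The plugin registry is a plain dict keyed by display name. Each entry can carry the optional `AsButton`, `AdvancedArgs` and `ArgsReminder` keys. The sketch below uses stand-in functions in place of `HotReload(...)` and made-up English plugin names, so the registry contents and the helper `describe_plugins` are hypothetical. It shows how those keys can be read. Entries with an explicit `"AsButton": False` land in the dropdown, which mirrors the `dropdown_fn_list` comprehension in `main.py`. Entries without that flag are presumably rendered as buttons. Entries with `"AdvancedArgs": True` ask for the advanced-argument textbox.

```python
from typing import Dict, List, Tuple

# Stand-ins for the real plugin generators, which get_crazy_functions() wraps in HotReload(...).
def parse_python_project(*args, **kwargs):
    return "analyzed"

def save_chat(*args, **kwargs):
    return "saved"

def parse_notebook(*args, **kwargs):
    return "parsed"

# Hypothetical registry that uses the same keys as the entries added in this diff.
function_plugins: Dict[str, dict] = {
    "Parse a Python project": {"Color": "stop", "Function": parse_python_project},
    "Save the current conversation": {"AsButton": False, "Function": save_chat},
    "Parse a Jupyter Notebook": {
        "Color": "stop",
        "AsButton": False,
        "Function": parse_notebook,
        "AdvancedArgs": True,
        "ArgsReminder": "Enter 0 to skip Markdown cells",
    },
}

def describe_plugins(plugins: Dict[str, dict]) -> Tuple[List[str], List[str], List[str]]:
    """Return (button entries, dropdown entries, entries that request advanced args)."""
    # "AsButton" defaults to True, so only an explicit False moves an entry into the dropdown.
    buttons = [name for name, spec in plugins.items() if spec.get("AsButton", True)]
    dropdown = [name for name, spec in plugins.items() if not spec.get("AsButton", True)]
    # "AdvancedArgs" defaults to False.
    advanced = [name for name, spec in plugins.items() if spec.get("AdvancedArgs", False)]
    return buttons, dropdown, advanced

if __name__ == "__main__":
    print(describe_plugins(function_plugins))
```

Because every key is optional with a default, existing plugins keep working unchanged when new keys such as `AdvancedArgs` are introduced.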

diff --git a/crazy_functions/crazy_functions_test.py b/crazy_functions/crazy_functions_test.py

index 7b0f0725..6020fa2f 100644

--- a/crazy_functions/crazy_functions_test.py

+++ b/crazy_functions/crazy_functions_test.py

@@ -108,6 +108,13 @@ def test_联网回答问题():

print("当前问答:", cb[-1][-1].replace("\n"," "))

for i, it in enumerate(cb): print亮蓝(it[0]); print亮黄(it[1])

+def test_解析ipynb文件():

+ from crazy_functions.解析JupyterNotebook import 解析ipynb文件

+ txt = "crazy_functions/test_samples"

+ for cookies, cb, hist, msg in 解析ipynb文件(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ print(cb)

+

+

# test_解析一个Python项目()

# test_Latex英文润色()

# test_Markdown中译英()

@@ -116,9 +123,8 @@ def test_联网回答问题():

# test_总结word文档()

# test_下载arxiv论文并翻译摘要()

# test_解析一个Cpp项目()

-

-test_联网回答问题()

-

+# test_联网回答问题()

+test_解析ipynb文件()

input("程序完成,回车退出。")

print("退出。")

\ No newline at end of file

diff --git a/crazy_functions/对话历史存档.py b/crazy_functions/对话历史存档.py

new file mode 100644

index 00000000..1b232de4

--- /dev/null

+++ b/crazy_functions/对话历史存档.py

@@ -0,0 +1,42 @@

+from toolbox import CatchException, update_ui

+from .crazy_utils import request_gpt_model_in_new_thread_with_ui_alive

+

+def write_chat_to_file(chatbot, file_name=None):

+ """

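+ Write the conversation shown in chatbot to a file under ./gpt_log/ (an .html file by default). If no file name is given, one is generated from the current time.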

+ """

+ import os

+ import time

+ if file_name is None:

+ file_name = 'chatGPT对话历史' + time.strftime("%Y-%m-%d-%H-%M-%S", time.localtime()) + '.html'

+ os.makedirs('./gpt_log/', exist_ok=True)

+ with open(f'./gpt_log/{file_name}', 'w', encoding='utf8') as f:

+ for i, contents in enumerate(chatbot):

+ for content in contents:

+ try: # the trigger condition of this bug has not been found; this is a stopgap for now

+ if type(content) != str: content = str(content)

+ except:

+ continue

+ f.write(content)

+ f.write('\n\n')

+ f.write('

\n\n')

+

+ res = '对话历史写入:' + os.path.abspath(f'./gpt_log/{file_name}')

+ print(res)

+ return res

+

+@CatchException

+def 对话历史存档(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ """

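+ txt text entered by the user in the input box, e.g. a passage to translate, or a path containing files to process

+ llm_kwargs GPT model parameters such as temperature and top_p; usually just passed through unchanged

+ plugin_kwargs plugin parameters, currently unused

+ chatbot handle of the chat display box, used to show output to the user

+ history chat history (previous context)

+ system_prompt silent system prompt for GPT

+ web_port port the software is currently running on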

+ """

+

+ chatbot.append(("保存当前对话", f"[Local Message] {write_chat_to_file(chatbot)}"))

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+

diff --git a/crazy_functions/解析JupyterNotebook.py b/crazy_functions/解析JupyterNotebook.py

new file mode 100644

index 00000000..95a3d696

--- /dev/null

+++ b/crazy_functions/解析JupyterNotebook.py

@@ -0,0 +1,145 @@

+from toolbox import update_ui

+from toolbox import CatchException, report_execption, write_results_to_file

+fast_debug = True

+

+

+class PaperFileGroup():

+ def __init__(self):

+ self.file_paths = []

+ self.file_contents = []

+ self.sp_file_contents = []

+ self.sp_file_index = []

+ self.sp_file_tag = []

+

+ # count_token

+ from request_llm.bridge_all import model_info

+ enc = model_info["gpt-3.5-turbo"]['tokenizer']

+ def get_token_num(txt): return len(

+ enc.encode(txt, disallowed_special=()))

+ self.get_token_num = get_token_num

+

+ def run_file_split(self, max_token_limit=1900):

+ """

+ Split long texts into segments that fit within max_token_limit

+ """

+ for index, file_content in enumerate(self.file_contents):

+ if self.get_token_num(file_content) < max_token_limit:

+ self.sp_file_contents.append(file_content)

+ self.sp_file_index.append(index)

+ self.sp_file_tag.append(self.file_paths[index])

+ else:

+ from .crazy_utils import breakdown_txt_to_satisfy_token_limit_for_pdf

+ segments = breakdown_txt_to_satisfy_token_limit_for_pdf(

+ file_content, self.get_token_num, max_token_limit)

+ for j, segment in enumerate(segments):

+ self.sp_file_contents.append(segment)

+ self.sp_file_index.append(index)

+ self.sp_file_tag.append(

+ self.file_paths[index] + f".part-{j}.txt")

+

+

+

+def parseNotebook(filename, enable_markdown=1):

+ import json

+

+ CodeBlocks = []

+ with open(filename, 'r', encoding='utf-8', errors='replace') as f:

+ notebook = json.load(f)

+ for cell in notebook['cells']:

+ if cell['cell_type'] == 'code' and cell['source']:

+ # remove blank lines

+ cell['source'] = [line for line in cell['source'] if line.strip()

+ != '']

+ CodeBlocks.append("".join(cell['source']))

+ elif enable_markdown and cell['cell_type'] == 'markdown' and cell['source']:

+ cell['source'] = [line for line in cell['source'] if line.strip()

+ != '']

+ CodeBlocks.append("Markdown:"+"".join(cell['source']))

+

+ Code = ""

+ for idx, code in enumerate(CodeBlocks):

+ Code += f"This is {idx+1}th code block: \n"

+ Code += code+"\n"

+

+ return Code

+

+

+def ipynb解释(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt):

+ from .crazy_utils import request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency

+

+ enable_markdown = plugin_kwargs.get("advanced_arg", "1")

+ try:

+ enable_markdown = int(enable_markdown)

+ except ValueError:

+ enable_markdown = 1

+

+ pfg = PaperFileGroup()

+

+ for fp in file_manifest:

+ file_content = parseNotebook(fp, enable_markdown=enable_markdown)

+ pfg.file_paths.append(fp)

+ pfg.file_contents.append(file_content)

+

+ # <-------- split overly long ipynb files ---------->

+ pfg.run_file_split(max_token_limit=1024)

+ n_split = len(pfg.sp_file_contents)

+

+ inputs_array = [r"This is a Jupyter Notebook file, tell me about each block in Chinese. Focus just on code. " +

+ r"If a block starts with `Markdown`, it is a markdown block in the ipynb file. " +

+ r"Start a new line for each block, and number the blocks in Chinese." +

+ f"\n\n{frag}" for frag in pfg.sp_file_contents]

+ inputs_show_user_array = [f"{f}的分析如下" for f in pfg.sp_file_tag]

+ sys_prompt_array = ["You are a professional programmer."] * n_split

+

+ gpt_response_collection = yield from request_gpt_model_multi_threads_with_very_awesome_ui_and_high_efficiency(

+ inputs_array=inputs_array,

+ inputs_show_user_array=inputs_show_user_array,

+ llm_kwargs=llm_kwargs,

+ chatbot=chatbot,

+ history_array=[[""] for _ in range(n_split)],

+ sys_prompt_array=sys_prompt_array,

+ # max_workers=5, # maximum parallelism allowed by OpenAI

+ scroller_max_len=80

+ )

+

+ # <-------- collect the results ---------->

+ block_result = " \n".join(gpt_response_collection)

+ chatbot.append(("解析的结果如下", block_result))

+ history.extend(["解析的结果如下", block_result])

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+

+ # <-------- write to file, exit ---------->

+ res = write_results_to_file(history)

+ chatbot.append(("完成了吗?", res))

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+

+@CatchException

+def 解析ipynb文件(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ chatbot.append([

+ "函数插件功能?",

+ "对IPynb文件进行解析。Contributor: codycjy."])

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+

+ history = [] # clear the history

+ import glob

+ import os

+ if os.path.exists(txt):

+ project_folder = txt

+ else:

+ if txt == "":

+ txt = '空空如也的输入栏'

+ report_execption(chatbot, history,

+ a=f"解析项目: {txt}", b=f"找不到本地项目或无权访问: {txt}")

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+ return

+ if txt.endswith('.ipynb'):

+ file_manifest = [txt]

+ else:

+ file_manifest = [f for f in glob.glob(

+ f'{project_folder}/**/*.ipynb', recursive=True)]

+ if len(file_manifest) == 0:

+ report_execption(chatbot, history,

+ a=f"解析项目: {txt}", b=f"找不到任何.ipynb文件: {txt}")

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+ return

+ yield from ipynb解释(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, )
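`parseNotebook` flattens a notebook into one prompt string. It drops blank lines, tags markdown cells with a `Markdown:` prefix, and numbers every block. One caveat applies: nbformat allows a cell's `source` to be either a list of lines or a single multi-line string. `parseNotebook` iterates over `cell['source']` directly, so a string source would be filtered character by character, which removes every space and newline. The sketch below is a hypothetical `flatten_notebook` helper, not part of the project. It follows the same flattening idea and normalizes both source shapes first. The input is an already-parsed notebook dict.

```python
from typing import List, Union

def _source_lines(source: Union[str, List[str]]) -> List[str]:
    # nbformat stores "source" either as a list of lines or as one multi-line string.
    if isinstance(source, str):
        return source.splitlines(keepends=True)
    return list(source)

def flatten_notebook(notebook: dict, enable_markdown: bool = True) -> str:
    """Concatenate code cells (and optionally markdown cells) into one prompt string."""
    blocks = []
    for cell in notebook.get("cells", []):
        lines = _source_lines(cell.get("source", []))
        if not lines:
            continue
        # Drop blank lines, as parseNotebook does.
        body = "".join(line for line in lines if line.strip())
        if cell.get("cell_type") == "code":
            blocks.append(body)
        elif enable_markdown and cell.get("cell_type") == "markdown":
            blocks.append("Markdown:" + body)
    # Same "This is Nth code block" framing as parseNotebook.
    return "".join(
        f"This is {i}th code block: \n{block}\n" for i, block in enumerate(blocks, start=1)
    )
```

For a file on disk, open the `.ipynb` with UTF-8 encoding and pass `json.load(f)` to `flatten_notebook`. Passing `enable_markdown=False` corresponds to entering `0` in the advanced-argument box.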

diff --git a/crazy_functions/解析项目源代码.py b/crazy_functions/解析项目源代码.py

index 375b87ae..bfa473ae 100644

--- a/crazy_functions/解析项目源代码.py

+++ b/crazy_functions/解析项目源代码.py

@@ -11,7 +11,7 @@ def 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs,

history_array = []

sys_prompt_array = []

report_part_1 = []

-

+

assert len(file_manifest) <= 512, "源文件太多(超过512个), 请缩减输入文件的数量。或者,您也可以选择删除此行警告,并修改代码拆分file_manifest列表,从而实现分批次处理。"

############################## <Step 1: analyze each file, multi-threaded> ##################################

for index, fp in enumerate(file_manifest):

@@ -63,10 +63,10 @@ def 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs,

current_iteration_focus = ', '.join([os.path.relpath(fp, project_folder) for index, fp in enumerate(this_iteration_file_manifest)])

i_say = f'根据以上分析,对程序的整体功能和构架重新做出概括。然后用一张markdown表格整理每个文件的功能(包括{previous_iteration_files_string})。'

inputs_show_user = f'根据以上分析,对程序的整体功能和构架重新做出概括,由于输入长度限制,可能需要分组处理,本组文件为 {current_iteration_focus} + 已经汇总的文件组。'

- this_iteration_history = copy.deepcopy(this_iteration_gpt_response_collection)

+ this_iteration_history = copy.deepcopy(this_iteration_gpt_response_collection)

this_iteration_history.append(last_iteration_result)

result = yield from request_gpt_model_in_new_thread_with_ui_alive(

- inputs=i_say, inputs_show_user=inputs_show_user, llm_kwargs=llm_kwargs, chatbot=chatbot,

+ inputs=i_say, inputs_show_user=inputs_show_user, llm_kwargs=llm_kwargs, chatbot=chatbot,

history=this_iteration_history, # 迭代之前的分析

sys_prompt="你是一个程序架构分析师,正在分析一个项目的源代码。")

report_part_2.extend([i_say, result])

@@ -222,8 +222,8 @@ def 解析一个Golang项目(txt, llm_kwargs, plugin_kwargs, chatbot, history, s

yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

return

yield from 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt)

-

-

+

+

@CatchException

def 解析一个Lua项目(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

history = [] # clear the history to avoid input overflow

@@ -243,9 +243,9 @@ def 解析一个Lua项目(txt, llm_kwargs, plugin_kwargs, chatbot, history, syst

report_execption(chatbot, history, a = f"解析项目: {txt}", b = f"找不到任何lua文件: {txt}")

yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

return

- yield from 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt)

-

-

+ yield from 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt)

+

+

@CatchException

def 解析一个CSharp项目(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

history = [] # clear the history to avoid input overflow

@@ -263,4 +263,45 @@ def 解析一个CSharp项目(txt, llm_kwargs, plugin_kwargs, chatbot, history, s

report_execption(chatbot, history, a = f"解析项目: {txt}", b = f"找不到任何CSharp文件: {txt}")

yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

return

- yield from 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt)

+ yield from 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt)

+

+

+@CatchException

+def 解析任意code项目(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ txt_pattern = plugin_kwargs.get("advanced_arg") or ""

+ txt_pattern = txt_pattern.replace("，", ",") # normalize full-width commas to ASCII commas

+ # patterns to match (e.g. *.c, *.cpp, *.py, config.toml)

+ pattern_include = [_.lstrip(" ,").rstrip(" ,") for _ in txt_pattern.split(",") if _.strip() and not _.strip().startswith("^")]

+ if not pattern_include: pattern_include = ["*"] # empty input matches everything

+ # file suffixes to exclude (e.g. ^*.c, ^*.cpp, ^*.py)

+ pattern_except_suffix = [_.lstrip(" ^*.,").rstrip(" ,") for _ in txt_pattern.split(",") if _.strip() and _.strip().startswith("^*.")]

+ pattern_except_suffix += ['zip', 'rar', '7z', 'tar', 'gz'] # do not parse archive files

+ # file names to exclude (e.g. ^README.md)

+ pattern_except_name = [_.lstrip(" ^*,").rstrip(" ,").replace(".", r"\.") for _ in txt_pattern.split(",") if _.strip() and _.strip().startswith("^") and not _.strip().startswith("^*.")]

+ # build the exclusion regular expression

+ pattern_except = r'/[^/]+\.(' + "|".join(pattern_except_suffix) + ')$'

+ pattern_except += '|/(' + "|".join(pattern_except_name) + ')$' if pattern_except_name != [] else ''

+

+ history.clear()

+ import glob, os, re

+ if os.path.exists(txt):

+ project_folder = txt

+ else:

+ if txt == "": txt = '空空如也的输入栏'

+ report_execption(chatbot, history, a = f"解析项目: {txt}", b = f"找不到本地项目或无权访问: {txt}")

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+ return

+ # if an archive was uploaded, locate its extracted folder first so the archive itself is not parsed

+ maybe_dir = [f for f in glob.glob(f'{project_folder}/*') if os.path.isdir(f)]

+ if len(maybe_dir)>0 and maybe_dir[0].endswith('.extract'):

+ extract_folder_path = maybe_dir[0]

+ else:

+ extract_folder_path = project_folder

+ # find the uploaded non-archive files and the extracted files that match the input patterns

+ file_manifest = [f for pattern in pattern_include for f in glob.glob(f'{extract_folder_path}/**/{pattern}', recursive=True) if "" != extract_folder_path and \

+ os.path.isfile(f) and (not re.search(pattern_except, f) or pattern.endswith('.' + re.search(pattern_except, f).group().split('.')[-1]))]

+ if len(file_manifest) == 0:

+ report_execption(chatbot, history, a = f"解析项目: {txt}", b = f"找不到任何文件: {txt}")

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+ return

+ yield from 解析源代码新(file_manifest, project_folder, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt)

\ No newline at end of file
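`解析任意code项目` turns the advanced argument into three things: include globs, excluded suffixes (archives are always added), and an exclusion regex matched against `/`-separated paths. The hypothetical `parse_patterns` helper below sketches that parsing as a pure function. It is not the project's code. It splits exclusions on commas like the include list (the diff splits exclusions on spaces). It also escapes names with `re.escape` and uses raw strings for the regex.

```python
import re
from typing import List, Optional, Tuple

ARCHIVE_SUFFIXES = ["zip", "rar", "7z", "tar", "gz"]  # archives are never parsed

def parse_patterns(advanced_arg: Optional[str]) -> Tuple[List[str], List[str], str]:
    """Split e.g. "*.c, ^*.cpp, config.toml, ^README.md" into include globs,
    excluded suffixes and an exclusion regex over '/'-separated paths."""
    text = (advanced_arg or "").replace("\uff0c", ",")  # full-width comma -> ASCII comma
    items = [p.strip() for p in text.split(",") if p.strip()]
    # Anything not starting with "^" is an include glob; empty input matches everything.
    include = [p for p in items if not p.startswith("^")] or ["*"]
    # "^*.ext" excludes a suffix; "^name" excludes an exact file name.
    except_suffix = [p[len("^*."):] for p in items if p.startswith("^*.")] + ARCHIVE_SUFFIXES
    except_name = [p[1:] for p in items if p.startswith("^") and not p.startswith("^*.")]
    regex = r"/[^/]+\.(" + "|".join(map(re.escape, except_suffix)) + r")$"
    if except_name:
        regex += r"|/(" + "|".join(map(re.escape, except_name)) + r")$"
    return include, except_suffix, regex
```

The plugin additionally keeps a file whose exclusion match is overridden by an include pattern ending in the same suffix. The sketch only builds the pieces and leaves that globbing step out.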

diff --git a/crazy_functions/询问多个大语言模型.py b/crazy_functions/询问多个大语言模型.py

index c28f2aae..5cf239e1 100644

--- a/crazy_functions/询问多个大语言模型.py

+++ b/crazy_functions/询问多个大语言模型.py

@@ -25,6 +25,35 @@ def 同时问询(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt

retry_times_at_unknown_error=0

)

+ history.append(txt)

+ history.append(gpt_say)

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

+

+

+@CatchException

+def 同时问询_指定模型(txt, llm_kwargs, plugin_kwargs, chatbot, history, system_prompt, web_port):

+ """

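+ txt text entered by the user in the input box, e.g. a passage to translate, or a path containing files to process

+ llm_kwargs GPT model parameters such as temperature and top_p; usually just passed through unchanged

+ plugin_kwargs plugin parameters; "advanced_arg" holds the &-separated list of models to query

+ chatbot handle of the chat display box, used to show output to the user

+ history chat history (previous context)

+ system_prompt silent system prompt for GPT

+ web_port port the software is currently running on

+ """

+ history = [] # clear the history to avoid input overflow

+ chatbot.append((txt, "正在同时咨询ChatGPT和ChatGLM……"))

+ yield from update_ui(chatbot=chatbot, history=history) # refresh the UI now, since the GPT request takes a while

+

+ # llm_kwargs['llm_model'] = 'chatglm&gpt-3.5-turbo&api2d-gpt-3.5-turbo' # any number of LLM backends is supported, separated by &

+ llm_kwargs['llm_model'] = plugin_kwargs.get("advanced_arg", "") or 'chatglm&gpt-3.5-turbo' # fall back to chatglm&gpt-3.5-turbo when the input is empty; models are separated by &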

+ gpt_say = yield from request_gpt_model_in_new_thread_with_ui_alive(

+ inputs=txt, inputs_show_user=txt,

+ llm_kwargs=llm_kwargs, chatbot=chatbot, history=history,

+ sys_prompt=system_prompt,

+ retry_times_at_unknown_error=0

+ )

+

history.append(txt)

history.append(gpt_say)

yield from update_ui(chatbot=chatbot, history=history) # refresh the UI

\ No newline at end of file
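`同时问询_指定模型` passes the advanced argument straight through as `llm_kwargs['llm_model']`. The request bridge is assumed here to split that string on `&`, but the split itself is not shown in this diff. If the wrapper hands the plugin an empty string for a blank textbox rather than omitting the key, a `.get()` default alone never applies. The hypothetical `resolve_models` helper below sketches the parsing with an explicit fallback. The default value comes from the diff; the helper is not project code.

```python
from typing import List, Optional

DEFAULT_MODELS = "chatglm&gpt-3.5-turbo"  # fallback used by 同时问询_指定模型

def resolve_models(advanced_arg: Optional[str]) -> List[str]:
    """Turn 'chatglm&gpt-3.5-turbo&api2d-gpt-4' into a list of model names."""
    # Treat None, "" and whitespace-only input alike: fall back to the defaults.
    raw = (advanced_arg or "").strip() or DEFAULT_MODELS
    return [name.strip() for name in raw.split("&") if name.strip()]
```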

diff --git a/crazy_functions/谷歌检索小助手.py b/crazy_functions/谷歌检索小助手.py

index 94ef2563..b9e1f8e3 100644

--- a/crazy_functions/谷歌检索小助手.py

+++ b/crazy_functions/谷歌检索小助手.py

@@ -70,6 +70,7 @@ def 谷歌检索小助手(txt, llm_kwargs, plugin_kwargs, chatbot, history, syst

# try importing dependencies; if any are missing, suggest how to install them

try:

import arxiv

+ import math

from bs4 import BeautifulSoup

except:

report_execption(chatbot, history,

@@ -80,25 +81,26 @@ def 谷歌检索小助手(txt, llm_kwargs, plugin_kwargs, chatbot, history, syst

# clear the history to avoid input overflow

history = []

-

meta_paper_info_list = yield from get_meta_information(txt, chatbot, history)

+ batchsize = 5

+ for batch in range(math.ceil(len(meta_paper_info_list)/batchsize)):

+ if len(meta_paper_info_list[:batchsize]) > 0:

+ i_say = "下面是一些学术文献的数据,提取出以下内容:" + \

+ "1、英文题目;2、中文题目翻译;3、作者;4、arxiv公开(is_paper_in_arxiv);5、引用数量(cite);6、中文摘要翻译。" + \

+ f"以下是信息源:{str(meta_paper_info_list[:batchsize])}"

- if len(meta_paper_info_list[:10]) > 0:

- i_say = "下面是一些学术文献的数据,请从中提取出以下内容。" + \

- "1、英文题目;2、中文题目翻译;3、作者;4、arxiv公开(is_paper_in_arxiv);4、引用数量(cite);5、中文摘要翻译。" + \

- f"以下是信息源:{str(meta_paper_info_list[:10])}"

+ inputs_show_user = f"请分析此页面中出现的所有文章:{txt},这是第{batch+1}批"

+ gpt_say = yield from request_gpt_model_in_new_thread_with_ui_alive(

+ inputs=i_say, inputs_show_user=inputs_show_user,

+ llm_kwargs=llm_kwargs, chatbot=chatbot, history=[],

+ sys_prompt="你是一个学术翻译,请从数据中提取信息。你必须使用Markdown表格。你必须逐个文献进行处理。"

+ )

- inputs_show_user = f"请分析此页面中出现的所有文章:{txt}"

- gpt_say = yield from request_gpt_model_in_new_thread_with_ui_alive(

- inputs=i_say, inputs_show_user=inputs_show_user,

- llm_kwargs=llm_kwargs, chatbot=chatbot, history=[],

- sys_prompt="你是一个学术翻译,请从数据中提取信息。你必须使用Markdown格式。你必须逐个文献进行处理。"

- )

+ history.extend([ f"第{batch+1}批", gpt_say ])

+ meta_paper_info_list = meta_paper_info_list[batchsize:]

- history.extend([ "第一批", gpt_say ])

- meta_paper_info_list = meta_paper_info_list[10:]

-

- chatbot.append(["状态?", "已经全部完成"])

+ chatbot.append(["状态?",

+ "已经全部完成,您可以试试让AI写一个Related Works,例如您可以继续输入Write a \"Related Works\" section about \"你搜索的研究领域\" for me."])

msg = '正常'

yield from update_ui(chatbot=chatbot, history=history, msg=msg) # refresh the UI

res = write_results_to_file(history)
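The Google Scholar helper now sends `math.ceil(len(meta_paper_info_list) / batchsize)` requests of at most `batchsize = 5` papers each. It does this by repeatedly taking the first five entries and then dropping them. The sketch below uses a hypothetical `split_into_batches` helper. It reproduces that chunking with index arithmetic instead of reassigning the list, so the batch boundaries are easy to check.

```python
import math
from typing import List, Sequence, TypeVar

T = TypeVar("T")

def split_into_batches(items: Sequence[T], batch_size: int = 5) -> List[List[T]]:
    """Chunk items into ceil(n / batch_size) consecutive batches; the last may be short."""
    n_batches = math.ceil(len(items) / batch_size)
    return [list(items[i * batch_size:(i + 1) * batch_size]) for i in range(n_batches)]
```

For example, a page yielding 12 papers would produce three requests, labelled 第1批 to 第3批 in the history, with 5, 5 and 2 papers.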

diff --git a/docs/WithFastapi.md b/docs/WithFastapi.md

new file mode 100644

index 00000000..188b5271

--- /dev/null

+++ b/docs/WithFastapi.md

@@ -0,0 +1,43 @@

+# Running with FastAPI

+

+FastAPI support lets the app be deployed under a sub-path (for example `http://localhost/subpath`) instead of the site root.

+

+1. Change the `CUSTOM_PATH` setting in `config.py`

+

+``` sh

+nano config.py

+```

+

+2. Edit `main.py`

+

+```diff

+ auto_opentab_delay()

+ - demo.queue(concurrency_count=CONCURRENT_COUNT).launch(server_name="0.0.0.0", server_port=PORT, auth=AUTHENTICATION, favicon_path="docs/logo.png")

+ + demo.queue(concurrency_count=CONCURRENT_COUNT)

+

+ - # To run under a sub-path

+ - # CUSTOM_PATH, = get_conf('CUSTOM_PATH')

+ - # if CUSTOM_PATH != "/":

+ - # from toolbox import run_gradio_in_subpath

+ - # run_gradio_in_subpath(demo, auth=AUTHENTICATION, port=PORT, custom_path=CUSTOM_PATH)

+ - # else:

+ - # demo.launch(server_name="0.0.0.0", server_port=PORT, auth=AUTHENTICATION, favicon_path="docs/logo.png")

+

+ + # To run under a sub-path

+ + CUSTOM_PATH, = get_conf('CUSTOM_PATH')

+ + if CUSTOM_PATH != "/":

+ + from toolbox import run_gradio_in_subpath

+ + run_gradio_in_subpath(demo, auth=AUTHENTICATION, port=PORT, custom_path=CUSTOM_PATH)

+ + else:

+ + demo.launch(server_name="0.0.0.0", server_port=PORT, auth=AUTHENTICATION, favicon_path="docs/logo.png")

+

+if __name__ == "__main__":

+ main()

+```

+

+

+3. Go!

+

+``` sh

+python main.py

+```
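`main.py` only takes the sub-path branch when `CUSTOM_PATH != "/"`. `run_gradio_in_subpath` lives in `toolbox` and is not part of this diff. Whatever it does internally, a value such as `subpath/` or `//subpath` would still differ from `"/"` while not being a clean mount path. The hypothetical helpers below sketch one way to normalize the setting before that comparison. They are an illustration under that assumption, not project code.

```python
def normalize_custom_path(custom_path: str) -> str:
    """Return '/' for the root, otherwise '/segment[/segment...]' with no trailing slash."""
    # Drop empty pieces produced by leading, trailing or doubled slashes.
    segments = [s for s in custom_path.strip().split("/") if s]
    return "/" + "/".join(segments)

def needs_subpath(custom_path: str) -> bool:
    # Mirrors the `if CUSTOM_PATH != "/"` branch in main.py, applied after normalization.
    return normalize_custom_path(custom_path) != "/"
```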

diff --git a/main.py b/main.py

index 6ec26a57..d100d4f9 100644

--- a/main.py

+++ b/main.py

@@ -45,7 +45,7 @@ def main():

gr_L1 = lambda: gr.Row().style()

gr_L2 = lambda scale: gr.Column(scale=scale)

- if LAYOUT == "TOP-DOWN":

+ if LAYOUT == "TOP-DOWN":

gr_L1 = lambda: DummyWith()

gr_L2 = lambda scale: gr.Row()

CHATBOT_HEIGHT /= 2

@@ -89,9 +89,12 @@ def main():

with gr.Row():

with gr.Accordion("更多函数插件", open=True):

dropdown_fn_list = [k for k in crazy_fns.keys() if not crazy_fns[k].get("AsButton", True)]

- with gr.Column(scale=1):

+ with gr.Row():

dropdown = gr.Dropdown(dropdown_fn_list, value=r"打开插件列表", label="").style(container=False)

- with gr.Column(scale=1):

+ with gr.Row():

+ plugin_advanced_arg = gr.Textbox(show_label=True, label="高级参数输入区", visible=False,

+ placeholder="这里是特殊函数插件的高级参数输入区").style(container=False)

+ with gr.Row():

switchy_bt = gr.Button(r"请先从插件列表中选择", variant="secondary")

with gr.Row():

with gr.Accordion("点击展开“文件上传区”。上传本地文件可供红色函数插件调用。", open=False) as area_file_up:

@@ -101,7 +104,7 @@ def main():

top_p = gr.Slider(minimum=-0, maximum=1.0, value=1.0, step=0.01,interactive=True, label="Top-p (nucleus sampling)",)

temperature = gr.Slider(minimum=-0, maximum=2.0, value=1.0, step=0.01, interactive=True, label="Temperature",)

max_length_sl = gr.Slider(minimum=256, maximum=4096, value=512, step=1, interactive=True, label="Local LLM MaxLength",)

- checkboxes = gr.CheckboxGroup(["基础功能区", "函数插件区", "底部输入区", "输入清除键"], value=["基础功能区", "函数插件区"], label="显示/隐藏功能区")

+ checkboxes = gr.CheckboxGroup(["基础功能区", "函数插件区", "底部输入区", "输入清除键", "插件参数区"], value=["基础功能区", "函数插件区"], label="显示/隐藏功能区")

md_dropdown = gr.Dropdown(AVAIL_LLM_MODELS, value=LLM_MODEL, label="更换LLM模型/请求源").style(container=False)

gr.Markdown(description)

@@ -123,11 +126,12 @@ def main():

ret.update({area_input_secondary: gr.update(visible=("底部输入区" in a))})

ret.update({clearBtn: gr.update(visible=("输入清除键" in a))})

ret.update({clearBtn2: gr.update(visible=("输入清除键" in a))})

+ ret.update({plugin_advanced_arg: gr.update(visible=("插件参数区" in a))})

if "底部输入区" in a: ret.update({txt: gr.update(value="")})

return ret

- checkboxes.select(fn_area_visibility, [checkboxes], [area_basic_fn, area_crazy_fn, area_input_primary, area_input_secondary, txt, txt2, clearBtn, clearBtn2] )

+ checkboxes.select(fn_area_visibility, [checkboxes], [area_basic_fn, area_crazy_fn, area_input_primary, area_input_secondary, txt, txt2, clearBtn, clearBtn2, plugin_advanced_arg] )

# group the frequently reused component handles

- input_combo = [cookies, max_length_sl, md_dropdown, txt, txt2, top_p, temperature, chatbot, history, system_prompt]

+ input_combo = [cookies, max_length_sl, md_dropdown, txt, txt2, top_p, temperature, chatbot, history, system_prompt, plugin_advanced_arg]

output_combo = [cookies, chatbot, history, status]

predict_args = dict(fn=ArgsGeneralWrapper(predict), inputs=input_combo, outputs=output_combo)

# submit and reset buttons

@@ -154,14 +158,19 @@ def main():

# function plugins: interaction between the dropdown and the switchable button

def on_dropdown_changed(k):

variant = crazy_fns[k]["Color"] if "Color" in crazy_fns[k] else "secondary"

- return {switchy_bt: gr.update(value=k, variant=variant)}

- dropdown.select(on_dropdown_changed, [dropdown], [switchy_bt] )

+ ret = {switchy_bt: gr.update(value=k, variant=variant)}

+ if crazy_fns[k].get("AdvancedArgs", False): # whether to show the advanced plugin argument area

+ ret.update({plugin_advanced_arg: gr.update(visible=True, label=f"插件[{k}]的高级参数说明:" + crazy_fns[k].get("ArgsReminder", "没有提供高级参数功能说明"))})

+ else:

+ ret.update({plugin_advanced_arg: gr.update(visible=False, label=f"插件[{k}]不需要高级参数。")})

+ return ret

+ dropdown.select(on_dropdown_changed, [dropdown], [switchy_bt, plugin_advanced_arg] )

def on_md_dropdown_changed(k):

return {chatbot: gr.update(label="当前模型:"+k)}

md_dropdown.select(on_md_dropdown_changed, [md_dropdown], [chatbot] )

# register the callback of the switchable button

def route(k, *args, **kwargs):

- if k in [r"打开插件列表", r"请先从插件列表中选择"]: return

+ if k in [r"打开插件列表", r"请先从插件列表中选择"]: return

yield from ArgsGeneralWrapper(crazy_fns[k]["Function"])(*args, **kwargs)

click_handle = switchy_bt.click(route,[switchy_bt, *input_combo, gr.State(PORT)], output_combo)

click_handle.then(on_report_generated, [file_upload, chatbot], [file_upload, chatbot])

@@ -179,7 +188,7 @@ def main():

print(f"如果浏览器没有自动打开,请复制并转到以下URL:")

print(f"\t(亮色主题): http://localhost:{PORT}")

print(f"\t(暗色主题): http://localhost:{PORT}/?__dark-theme=true")

- def open():

+ def open():

time.sleep(2) # wait, then open the browser

webbrowser.open_new_tab(f"http://localhost:{PORT}/?__dark-theme=true")

threading.Thread(target=open, name="open-browser", daemon=True).start()

@@ -189,5 +198,13 @@ def main():

auto_opentab_delay()

demo.queue(concurrency_count=CONCURRENT_COUNT).launch(server_name="0.0.0.0", share=False, favicon_path="docs/logo.png")

+ # To run under a sub-path

+ # CUSTOM_PATH, = get_conf('CUSTOM_PATH')

+ # if CUSTOM_PATH != "/":

+ # from toolbox import run_gradio_in_subpath

+ # run_gradio_in_subpath(demo, auth=AUTHENTICATION, port=PORT, custom_path=CUSTOM_PATH)

+ # else:

+ # demo.launch(server_name="0.0.0.0", server_port=PORT, auth=AUTHENTICATION, favicon_path="docs/logo.png")

+

if __name__ == "__main__":

main()
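`on_dropdown_changed` returns `gr.update(...)` objects keyed by component. With Gradio stripped away, the decision it makes for the advanced-argument textbox can be sketched as a pure function. The helper `advanced_arg_update` is hypothetical. It returns a plain dict in place of `gr.update`, and its labels are English stand-ins for the Chinese UI strings. The reminder default must be a string: with a list default, `str + list` raises `TypeError` for any plugin that sets `AdvancedArgs` without an `ArgsReminder`.

```python
from typing import Dict

def advanced_arg_update(name: str, plugins: Dict[str, dict]) -> dict:
    """Decide visibility and label of the advanced-argument textbox for plugin `name`."""
    spec = plugins[name]
    if spec.get("AdvancedArgs", False):
        # A str default keeps the concatenation below from raising TypeError.
        reminder = spec.get("ArgsReminder", "no description of the advanced arguments provided")
        return {"visible": True, "label": f"Advanced arguments for plugin [{name}]: " + reminder}
    return {"visible": False, "label": f"Plugin [{name}] takes no advanced arguments."}
```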

diff --git a/request_llm/README.md b/request_llm/README.md

index 973adea1..4a912d10 100644

--- a/request_llm/README.md

+++ b/request_llm/README.md

@@ -1,4 +1,4 @@

-# How to use other large language models (being tested on the v3.0 branch)

+# How to use other large language models

## ChatGLM

@@ -15,7 +15,7 @@ LLM_MODEL = "chatglm"

---

-## Text-Generation-UI (TGUI)

+## Text-Generation-UI (TGUI, under debugging, currently unusable)

### 1. Deploy TGUI

``` sh

diff --git a/request_llm/bridge_all.py b/request_llm/bridge_all.py

index d1bc5189..311dc6f4 100644

--- a/request_llm/bridge_all.py

+++ b/request_llm/bridge_all.py

@@ -1,12 +1,12 @@

"""

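- This file mainly contains 2 functions

+ This file mainly contains 2 functions that form the common interface to all LLMs; they call further down into the lower-level LLM models and handle details such as running several models in parallel

- Function without multi-threading capability:

- 1. predict: used in normal conversation, with full interactive features; cannot be multi-threaded

+ Function without multi-threading capability: used in normal conversation, with full interactive features; cannot be multi-threaded

+ 1. predict(...)

- Function that can be called from multiple threads

- 2. predict_no_ui_long_connection: experiments showed that the connection to OpenAI tends to drop when predict_no_ui processes long documents; this function avoids the problem by streaming, and also supports multi-threading

+ Function that can be called from multiple threads: called from function plugins, flexible and concise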

+ 2. predict_no_ui_long_connection(...)

"""

import tiktoken

from functools import lru_cache

@@ -210,7 +210,7 @@ def predict_no_ui_long_connection(inputs, llm_kwargs, history, sys_prompt, obser

return_string_collect.append( f"【{str(models[i])} 说】: {future.result()} " )

window_mutex[-1] = False # stop mutex thread

- res = '

\n\n---\n\n'.join(return_string_collect)

+ res = '

\n\n---\n\n'.join(return_string_collect)

return res

diff --git a/request_llm/bridge_chatglm.py b/request_llm/bridge_chatglm.py

index 7af28356..fb44043c 100644

--- a/request_llm/bridge_chatglm.py

+++ b/request_llm/bridge_chatglm.py

@@ -32,6 +32,7 @@ class GetGLMHandle(Process):

return self.chatglm_model is not None

def run(self):

+ # Runs in the child process

# First run: load the model parameters

retry = 0

while True:

@@ -53,17 +54,24 @@ class GetGLMHandle(Process):

self.child.send('[Local Message] Call ChatGLM fail 不能正常加载ChatGLM的参数。')

raise RuntimeError("不能正常加载ChatGLM的参数!")

- # Enter the task-waiting state

while True:

+ # Enter the task-waiting state

kwargs = self.child.recv()

+ # Message received; start handling the request

try:

for response, history in self.chatglm_model.stream_chat(self.chatglm_tokenizer, **kwargs):

self.child.send(response)

+ # # Receive a possible termination command mid-stream (if any)

+ # if self.child.poll():

+ # command = self.child.recv()

+ # if command == '[Terminate]': break

except:

self.child.send('[Local Message] Call ChatGLM fail.')

+ # Request handled; start the next loop iteration

self.child.send('[Finish]')

def stream_chat(self, **kwargs):

+ # Runs in the main process

self.parent.send(kwargs)

while True:

res = self.parent.recv()
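`GetGLMHandle` splits ChatGLM into two sides. The child side (`run`) loads the model once and then loops on `recv()`. The parent side (`stream_chat`) sends one request and reads partial replies until the `[Finish]` sentinel arrives. The hunk is cut off after `self.parent.recv()`, so the sentinel check on the parent side is assumed. Below is a minimal sketch of that protocol over a `multiprocessing.Pipe`. It uses a thread instead of a `Process` so it runs self-contained. `fake_stream_chat` and the `None` shutdown message are hypothetical:

```python
import threading
from multiprocessing import Pipe

FINISH = "[Finish]"


def fake_stream_chat(query):
    # Hypothetical stand-in for chatglm_model.stream_chat: yields growing partial replies
    partial = []
    for word in query.split():
        partial.append(word)
        yield " ".join(partial)


def worker(child_conn):
    # Child side: wait for a request, stream partial replies, then send the sentinel
    while True:
        kwargs = child_conn.recv()
        if kwargs is None:  # hypothetical shutdown message, not in the original
            break
        try:
            for response in fake_stream_chat(**kwargs):
                child_conn.send(response)
        except Exception:
            child_conn.send("[Local Message] Call ChatGLM fail.")
        child_conn.send(FINISH)


def stream_chat(parent_conn, **kwargs):
    # Parent side: send one request and yield replies until the sentinel arrives
    parent_conn.send(kwargs)
    while True:
        res = parent_conn.recv()
        if res == FINISH:
            break
        yield res


parent_conn, child_conn = Pipe()
t = threading.Thread(target=worker, args=(child_conn,), daemon=True)
t.start()
replies = list(stream_chat(parent_conn, query="hello from the parent"))
parent_conn.send(None)
t.join()
print(replies)
```

The sentinel lets one long-lived worker serve many requests in sequence, so the expensive model load happens only once.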

@@ -92,8 +100,8 @@ def predict_no_ui_long_connection(inputs, llm_kwargs, history=[], sys_prompt="",

# chatglm has no sys_prompt interface, so the prompt is added to history

history_feedin = []

+ history_feedin.append(["What can I do?", sys_prompt])

for i in range(len(history)//2):

- history_feedin.append(["What can I do?", sys_prompt] )

history_feedin.append([history[2*i], history[2*i+1]] )

watch_dog_patience = 5 # watchdog patience; 5 seconds is enough

@@ -130,11 +138,17 @@ def predict(inputs, llm_kwargs, plugin_kwargs, chatbot, history=[], system_promp

if "PreProcess" in core_functional[additional_fn]: inputs = core_functional[additional_fn]["PreProcess"](inputs) # apply the preprocessing function (if any)

inputs = core_functional[additional_fn]["Prefix"] + inputs + core_functional[additional_fn]["Suffix"]

+ # Process the history

history_feedin = []

+ history_feedin.append(["What can I do?", system_prompt] )

for i in range(len(history)//2):

- history_feedin.append(["What can I do?", system_prompt] )

history_feedin.append([history[2*i], history[2*i+1]] )

+ # Start receiving ChatGLM's reply

for response in glm_handle.stream_chat(query=inputs, history=history_feedin, max_length=llm_kwargs['max_length'], top_p=llm_kwargs['top_p'], temperature=llm_kwargs['temperature']):

chatbot[-1] = (inputs, response)

- yield from update_ui(chatbot=chatbot, history=history)

\ No newline at end of file

+ yield from update_ui(chatbot=chatbot, history=history)

+

+ # Finalize the output

+ history.extend([inputs, response])

+ yield from update_ui(chatbot=chatbot, history=history)
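The ChatGLM fix moves the system prompt out of the loop. ChatGLM takes its history as a list of `[query, response]` pairs, so the bridge fakes a first round whose answer is the system prompt. Before the fix, that fake round was appended once per past round, and never at all when the history was empty. After the fix, it appears exactly once at the front. Below is a sketch comparing the two versions (both function names are hypothetical):

```python
def build_history_feedin_old(history, sys_prompt):
    # Pre-fix behaviour: the fake system-prompt round is repeated inside the loop
    feedin = []
    for i in range(len(history) // 2):
        feedin.append(["What can I do?", sys_prompt])
        feedin.append([history[2 * i], history[2 * i + 1]])
    return feedin


def build_history_feedin(history, sys_prompt):
    # Post-fix behaviour: one fake round carrying the system prompt, then the real rounds
    feedin = [["What can I do?", sys_prompt]]
    for i in range(len(history) // 2):
        # history is flat: [q1, a1, q2, a2, ...]
        feedin.append([history[2 * i], history[2 * i + 1]])
    return feedin


flat = ["q1", "a1", "q2", "a2"]
print(build_history_feedin_old(flat, "sys"))
print(build_history_feedin(flat, "sys"))
```

The same hunk also makes `predict` append `[inputs, response]` to `history` after streaming, so follow-up questions keep their context.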

diff --git a/request_llm/bridge_chatgpt.py b/request_llm/bridge_chatgpt.py

index c1a900b1..5e32f452 100644

--- a/request_llm/bridge_chatgpt.py

+++ b/request_llm/bridge_chatgpt.py

@@ -21,7 +21,7 @@ import importlib

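# Put your own secrets, such as API keys and proxy URLs, in config_private.py

# When reading, first check whether a private config_private file (not tracked by git) exists; if so, it overrides the original config file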

-from toolbox import get_conf, update_ui, is_any_api_key, select_api_key, what_keys

+from toolbox import get_conf, update_ui, is_any_api_key, select_api_key, what_keys, clip_history

proxies, API_KEY, TIMEOUT_SECONDS, MAX_RETRY = \

get_conf('proxies', 'API_KEY', 'TIMEOUT_SECONDS', 'MAX_RETRY')

@@ -145,7 +145,7 @@ def predict(inputs, llm_kwargs, plugin_kwargs, chatbot, history=[], system_promp

yield from update_ui(chatbot=chatbot, history=history, msg="api-key不满足要求") # refresh the UI

return

- history.append(inputs); history.append(" ")

+ history.append(inputs); history.append("")

retry = 0

while True:

@@ -198,14 +198,17 @@ def predict(inputs, llm_kwargs, plugin_kwargs, chatbot, history=[], system_promp

chunk_decoded = chunk.decode()

error_msg = chunk_decoded

if "reduce the length" in error_msg:

- chatbot[-1] = (chatbot[-1][0], "[Local Message] Reduce the length. 本次输入过长,或历史数据过长. 历史缓存数据现已释放,您可以请再次尝试.")

- history = [] # 清除历史

+ if len(history) >= 2: history[-1] = ""; history[-2] = "" # clear the overflowing current round: history[-2] is this input, history[-1] is this output

+ history = clip_history(inputs=inputs, history=history, tokenizer=model_info[llm_kwargs['llm_model']]['tokenizer'],

+ max_token_limit=(model_info[llm_kwargs['llm_model']]['max_token'])) # frees at least half of the history

+ chatbot[-1] = (chatbot[-1][0], "[Local Message] Reduce the length. 本次输入过长, 或历史数据过长. 历史缓存数据已部分释放, 您可以请再次尝试. (若再次失败则更可能是因为输入过长.)")

+ # history = [] # clear the history

elif "does not exist" in error_msg:

- chatbot[-1] = (chatbot[-1][0], f"[Local Message] Model {llm_kwargs['llm_model']} does not exist. 模型不存在,或者您没有获得体验资格.")

+ chatbot[-1] = (chatbot[-1][0], f"[Local Message] Model {llm_kwargs['llm_model']} does not exist. 模型不存在, 或者您没有获得体验资格.")

elif "Incorrect API key" in error_msg:

- chatbot[-1] = (chatbot[-1][0], "[Local Message] Incorrect API key. OpenAI以提供了不正确的API_KEY为由,拒绝服务.")

+ chatbot[-1] = (chatbot[-1][0], "[Local Message] Incorrect API key. OpenAI以提供了不正确的API_KEY为由, 拒绝服务.")

elif "exceeded your current quota" in error_msg:

- chatbot[-1] = (chatbot[-1][0], "[Local Message] You exceeded your current quota. OpenAI以账户额度不足为由,拒绝服务.")

+ chatbot[-1] = (chatbot[-1][0], "[Local Message] You exceeded your current quota. OpenAI以账户额度不足为由, 拒绝服务.")

elif "bad forward key" in error_msg:

chatbot[-1] = (chatbot[-1][0], "[Local Message] Bad forward key. API2D账户额度不足.")

elif "Not enough point" in error_msg:
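The error branch maps substrings of the API's error payload to local messages. On a context-length error, it now blanks only the overflowing round before calling `clip_history`, instead of discarding the whole history. Below is a minimal sketch of both pieces. The table form, the shortened message texts, the helper names, and the fallback message are all hypothetical (the hunk ends before the remaining branches):

```python
ERROR_HINTS = [
    # (substring to look for, local message) in the same order as the elif chain
    ("reduce the length", "[Local Message] Reduce the length."),
    ("does not exist", "[Local Message] Model does not exist."),
    ("Incorrect API key", "[Local Message] Incorrect API key."),
    ("exceeded your current quota", "[Local Message] You exceeded your current quota."),
    ("bad forward key", "[Local Message] Bad forward key."),
]


def classify_error(error_msg: str) -> str:
    # The first matching substring wins, just like the first true elif
    for needle, hint in ERROR_HINTS:
        if needle in error_msg:
            return hint
    return "[Local Message] Unknown error."  # hypothetical fallback


def drop_overflowing_round(history: list) -> list:
    # history[-2] is this round's input and history[-1] its (still empty) output
    if len(history) >= 2:
        history[-1] = ""
        history[-2] = ""
    return history


print(classify_error("Please reduce the length of the messages."))
print(drop_overflowing_round(["q1", "a1", "q2", ""]))
```

Blanking the current round first keeps the oversized input from being counted again when `clip_history` trims the older rounds.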

diff --git a/toolbox.py b/toolbox.py

index d2c9e6ec..c9dc2070 100644

--- a/toolbox.py

+++ b/toolbox.py

@@ -24,23 +24,23 @@ def ArgsGeneralWrapper(f):

"""

Decorator that regroups the input arguments, changing their order and structure.

"""

- def decorated(cookies, max_length, llm_model, txt, txt2, top_p, temperature, chatbot, history, system_prompt, *args):

+ def decorated(cookies, max_length, llm_model, txt, txt2, top_p, temperature, chatbot, history, system_prompt, plugin_advanced_arg, *args):

txt_passon = txt

if txt == "" and txt2 != "": txt_passon = txt2

# Introduce a chatbot that carries cookies

cookies.update({

- 'top_p':top_p,

+ 'top_p':top_p,

'temperature':temperature,

})

llm_kwargs = {

'api_key': cookies['api_key'],

'llm_model': llm_model,

- 'top_p':top_p,

+ 'top_p':top_p,

'max_length': max_length,

'temperature':temperature,

}

plugin_kwargs = {

- # None yet

+ "advanced_arg": plugin_advanced_arg,

}

chatbot_with_cookie = ChatBotWithCookies(cookies)

chatbot_with_cookie.write_list(chatbot)

@@ -219,7 +219,7 @@ def markdown_convertion(txt):

return content

else:

return tex2mathml_catch_exception(content)

-

+

def markdown_bug_hunt(content):

"""

解决一个mdx_math的bug(单$包裹begin命令时多余\n', '')

return content

-

+

if ('$' in txt) and ('```' not in txt): # contains $ formula markers and no ``` code-block markers

# convert everything to html format

@@ -248,7 +248,7 @@ def markdown_convertion(txt):

def close_up_code_segment_during_stream(gpt_reply):

"""

While GPT is midway through outputting code (the opening ``` has been output but the closing ``` has not yet), append the closing ```

-

+

Args:

gpt_reply (str): the reply string returned by the GPT model.

@@ -511,7 +511,7 @@ class DummyWith():

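What it does is... um... nothing; that is, it stands in for another context manager without changing the code structure.

A context manager is a Python object used with the with statement

to ensure that resources are properly initialized and cleaned up while the code block runs.

- A context manager must implement two methods: __enter__() and __exit__().

+ A context manager must implement two methods: __enter__() and __exit__().

When the context starts, __enter__() is called before the code block runs,

and when the context ends, __exit__() is called.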

"""

@@ -520,3 +520,83 @@ class DummyWith():

def __exit__(self, exc_type, exc_value, traceback):

return

+

+def run_gradio_in_subpath(demo, auth, port, custom_path):

+ def is_path_legal(path: str)->bool:

+ '''

+ check whether path can be used as a sub-URL

+ path: path to check

+ return value: True if the path is "/" or a valid sub-URL

+ '''

+ if path == "/": return True

+ if len(path) == 0:

+ print("illegal custom path: {}\npath must not be empty\ndeploy on root url".format(path))

+ return False

+ if path[0] == '/':

+ if path[1] != '/':

+ print("deploy on sub-path {}".format(path))

+ return True

+ return False

+ print("illegal custom path: {}\npath should begin with \'/\'\ndeploy on root url".format(path))

+ return False

+

+ if not is_path_legal(custom_path): raise RuntimeError('Illegal custom path')

+ import uvicorn

+ import gradio as gr

+ from fastapi import FastAPI

+ app = FastAPI()

+ if custom_path != "/":

+ @app.get("/")

+ def read_main():

+ return {"message": f"Gradio is running at: {custom_path}"}

+ app = gr.mount_gradio_app(app, demo, path=custom_path)

+ uvicorn.run(app, host="0.0.0.0", port=port) # , auth=auth

+

+

+def clip_history(inputs, history, tokenizer, max_token_limit):

+ """

+ Reduce the length of the history by clipping.

+ This function repeatedly clips the longest entry, a little at a time,

+ until the number of tokens in the history falls below the threshold.

+ """

+ import numpy as np

+ from request_llm.bridge_all import model_info

+ def get_token_num(txt):

+ return len(tokenizer.encode(txt, disallowed_special=()))

+ input_token_num = get_token_num(inputs)

+ if input_token_num < max_token_limit * 3 / 4:

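+ # When the input takes less than 3/4 of the limit, before clipping:

+ # 1. reserve room for the input

+ max_token_limit = max_token_limit - input_token_num

+ # 2. reserve room for the output

+ max_token_limit = max_token_limit - 128

+ # 3. if the remaining budget is too small, clear the history outright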

+ if max_token_limit < 128:

+ history = []

+ return history

+ else:

+ # When the input takes more than 3/4 of the limit, clear the history outright

+ history = []

+ return history

+

+ everything = ['']

+ everything.extend(history)

+ n_token = get_token_num('\n'.join(everything))

+ everything_token = [get_token_num(e) for e in everything]

+

+ # clipping granularity

+ delta = max(everything_token) // 16

+

+ while n_token > max_token_limit:

+ where = np.argmax(everything_token)

+ encoded = tokenizer.encode(everything[where], disallowed_special=())

+ clipped_encoded = encoded[:len(encoded)-delta]

+ everything[where] = tokenizer.decode(clipped_encoded)[:-1] # [:-1] drops the last char, which may be an invalid partial character

+ everything_token[where] = get_token_num(everything[where])

+ n_token = get_token_num('\n'.join(everything))

+

+ history = everything[1:]

+ return history
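`clip_history` reserves room for the input's tokens plus 128 tokens for the reply. It then repeatedly trims the currently longest entry by about 1/16 of the longest entry's initial length, until the joined history fits. Below is a minimal sketch of the same loop. It assumes a toy whitespace tokenizer (`WordTokenizer`, hypothetical) in place of a tiktoken encoding, and uses Python's `max` in place of `np.argmax` (both return the first maximal index).

There are two deliberate deviations from the original. First, the trailing `[:-1]` character trim is dropped, because whole words cannot decode to a partial character. Second, the step `delta` is floored at 1 token. Without the `[:-1]` trim, `max(everything_token) // 16` would be 0 whenever every entry is shorter than 16 tokens, and the loop would never make progress:

```python
class WordTokenizer:
    """Hypothetical toy tokenizer: one token per whitespace-separated word."""

    def encode(self, txt, disallowed_special=()):
        return txt.split()

    def decode(self, tokens):
        return " ".join(tokens)


def clip_history(inputs, history, tokenizer, max_token_limit):
    def get_token_num(txt):
        return len(tokenizer.encode(txt, disallowed_special=()))

    input_token_num = get_token_num(inputs)
    if input_token_num >= max_token_limit * 3 / 4:
        return []  # the input alone takes 3/4 of the budget: drop all history
    # Reserve room for the input and 128 tokens for the reply
    budget = max_token_limit - input_token_num - 128
    if budget < 128:
        return []  # too little room left: drop all history

    everything = [""] + list(history)  # copy, so the caller's list is not modified
    everything_token = [get_token_num(e) for e in everything]
    delta = max(max(everything_token) // 16, 1)  # clipping granularity, at least 1 token

    while get_token_num("\n".join(everything)) > budget:
        # Index of the longest entry (first one on ties, like np.argmax)
        where = max(range(len(everything)), key=everything_token.__getitem__)
        encoded = tokenizer.encode(everything[where], disallowed_special=())
        everything[where] = tokenizer.decode(encoded[: len(encoded) - delta])
        everything_token[where] = get_token_num(everything[where])
    return everything[1:]


tok = WordTokenizer()
history = [" ".join(["a"] * 600), " ".join(["b"] * 500)]
clipped = clip_history("short question", history, tok, max_token_limit=1000)
print([len(h.split()) for h in clipped])
```

Trimming the longest entry first spreads the loss across long entries, so short recent exchanges survive intact.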

diff --git a/version b/version

index bb462e21..a2a877b7 100644

--- a/version

+++ b/version

@@ -1,5 +1,5 @@

{

- "version": 3.1,

+ "version": 3.2,

"show_feature": true,

- "new_feature": "添加支持清华ChatGLM和GPT-4 <-> 改进架构,支持与多个LLM模型同时对话 <-> 添加支持API2D(国内,可支持gpt4)<-> 支持多API-KEY负载均衡(并列填写,逗号分割) <-> 添加输入区文本清除按键"

+ "new_feature": "保存对话功能 <-> 解读任意语言代码+同时询问任意的LLM组合 <-> 添加联网(Google)回答问题插件 <-> 修复ChatGLM上下文BUG <-> 添加支持清华ChatGLM和GPT-4 <-> 改进架构,支持与多个LLM模型同时对话 <-> 添加支持API2D(国内,可支持gpt4)"

}
